FOSDEM 26 - My Hallway Track Takeaways

Highlights from my trip to Brussels

FOSDEM 2026 was my third FOSDEM. Like most veterans of the event, I have come to appreciate the people more than the talks. The talks are still fire! Some are extremely impressive technically. But they can be rewatched, since the VODs and slides are widely available. The people are what you don’t get to meet face-to-face often, and this is the one time when so many interesting, talented folks gather in such high concentration.

So here is a quick recap of the vibes I picked up in FOSDEM 26’s Hallway Track (including lunches and dinners). For privacy reasons, I will not name names or point to any specific companies or individuals (unless the information is already public or comes from a talk).

Impact of Coding Agents

There is a clear division on AI at FOSDEM.

Many big EU organizations are uncomfortable with adopting Coding Agents (or AI in general). The reasons range from privacy to environmental concerns, but the one front and center was security and sovereignty: they are not comfortable sending their Intellectual Property over the internet to American-owned companies for inference. The inference provider (and, to some extent, the model provider) needs to be an EU entity that cannot be pressured by any state actor in its decision-making.

But smaller EU startups and hackers are not constrained by these requirements. To them, AI has been a blessing. Many folks reported that, just in the last few weeks, they have stopped writing code directly and switched to prompting 3-4 agents instead. They get faster Proofs of Concept, faster time-to-market, and higher productivity overall. Ideas that would have taken 3-4 months, and that they had shelved for lack of time, now take a few days, if not just a few hours.

This division is a strange inflection point. My take on this is: the demand for AI is there, very real, and has monetary impacts. It calls for EU infrastructure to support AI demand and better funding to retain talent. But while the problem statement is clear, the solution remains murky at best. Some are advocating for deregulation to speed up the private sector, while others prefer more control over software service quality and a more centralized funding model to drive growth.

Version Control

I had to queue for 15 minutes to squeeze into Janson, the biggest room at FOSDEM, for Patrick Steinhardt’s main-track talk about recent advancements in Git. The entire lecture hall was packed, with some folks standing along the sides and at the back. There has never been more interest in version control systems.

In the VCS Birds of a Feather (BoF) session, attended by engineers from big tech organizations, the problems of Git (and its ecosystem) started to surface:

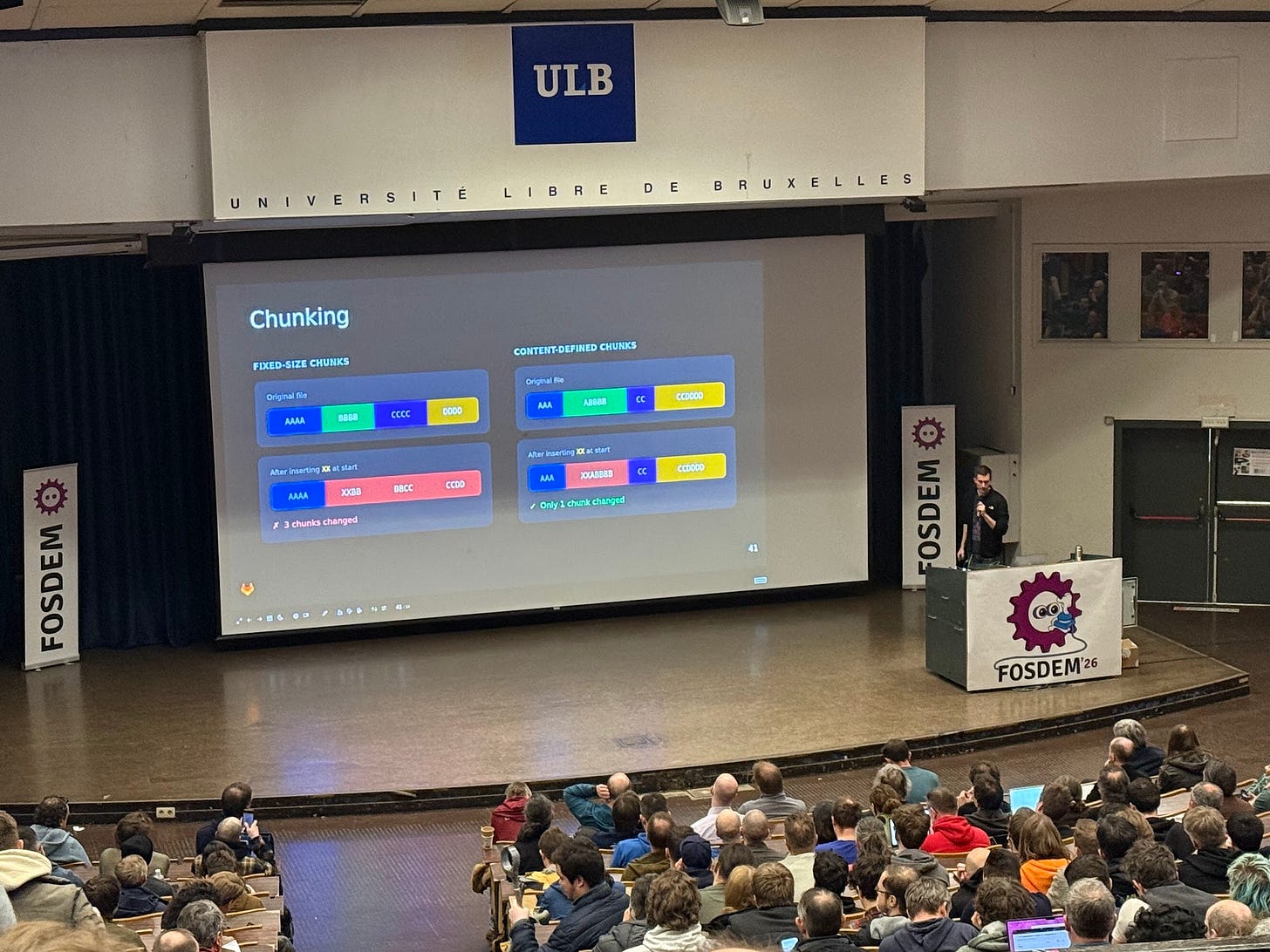

Gaming companies need a good solution for tracking non-source-code data (e.g. graphic assets). The same goes for companies with big datasets that need versioning. Patrick’s talk mentioned that Git plans to replace LFS with a content-defined chunking scheme and multi-tier, promisor-based storage backends. However, there is no clear timeline for that goal, and it does not seem like all the Git forges (cough hub hub cough) are interested in backing such a project at the moment.
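For readers who have not met content-defined chunking before: instead of cutting a file every N bytes, you cut wherever a rolling hash of the most recent bytes matches a pattern, so inserting a few bytes near the start only changes the chunk or two around the edit and every later chunk still deduplicates. The sketch below is a minimal illustration using a Gear rolling hash (the hash family FastCDC builds on); the size limits, boundary probability, and seed are arbitrary assumptions, and this is not the scheme Git has announced.

```python
import random

# Illustrative parameters (assumptions, not Git's): chunks are at least 256 bytes,
# at most 8 KiB, and a boundary fires with probability ~1/1024 per byte.
MIN_SIZE = 256
MAX_SIZE = 8 * 1024
BOUNDARY_BITS = 10
MASK64 = (1 << 64) - 1

# Gear table: one pseudo-random 64-bit value per possible byte value.
# A fixed seed keeps boundaries stable across runs.
_rng = random.Random(42)
GEAR = [_rng.getrandbits(64) for _ in range(256)]


def chunk(data: bytes) -> list[bytes]:
    """Split data at content-defined boundaries using a Gear rolling hash."""
    chunks = []
    start = 0
    h = 0
    for i, byte in enumerate(data):
        # Shifting left each step means only the last 64 bytes influence h.
        h = ((h << 1) + GEAR[byte]) & MASK64
        size = i - start + 1
        if size < MIN_SIZE:
            continue
        # Cut where the top bits of the hash are zero, or when the chunk is too big.
        if (h >> (64 - BOUNDARY_BITS)) == 0 or size >= MAX_SIZE:
            chunks.append(data[start : i + 1])
            start = i + 1
            h = 0
    if start < len(data):
        chunks.append(data[start:])
    return chunks
```

Prepending ten bytes to a file changes the first chunk (and occasionally the one after it); the remaining chunks come out byte-for-byte identical, which is what lets a storage backend keep only one copy of each.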

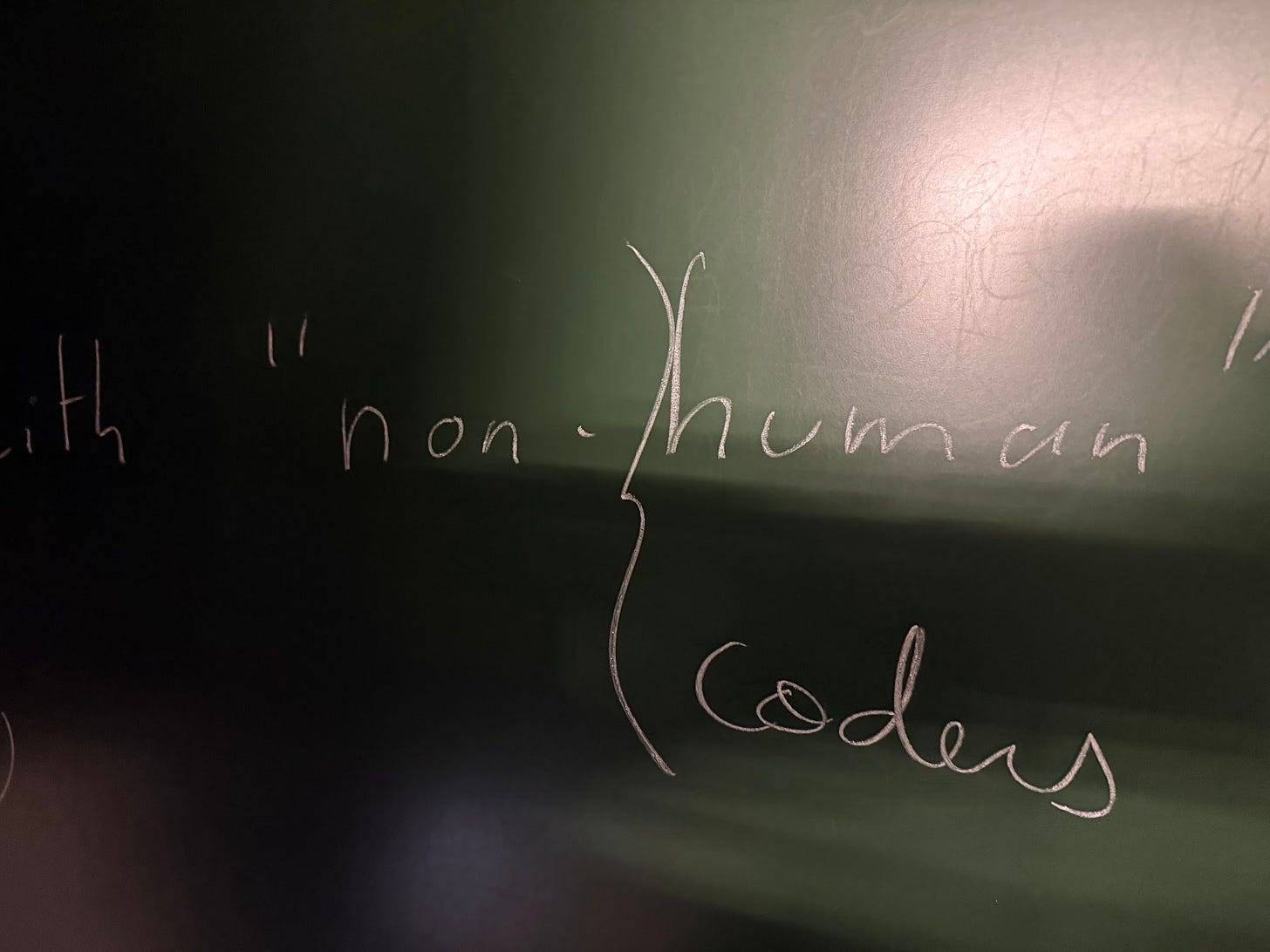

The impacts of Coding Agents were also discussed:

More monorepos: Coding agents work better with a monorepo because file-system navigation is well represented in their training data. Organizations with monorepos need a better way to narrow access control down to specific components. For example, if a team of contractors is working on /monorepo/project-a, they should not be able to view project-b or project-c. Current git-submodule-based solutions leave a lot of room for improvement.

Changes in forge designs: bug reports, code review, CI, and deployment flows are all under heavy pressure as code gets cheaper. I wrote about this not long ago.

First-class merge conflict support: Pierre-Étienne Meunier, author of the Pijul VCS and one of the BoF hosts, is definitely a pioneer in this area. But the recent rise of Jujutsu also proves that this can work with Git in a backward-compatible manner. As we get more code, we will get more conflicts. We want to preserve the resolution of each conflict so that it can be reused during rebases and merges, saving us all from repeatedly prompting the agents for the same fix.
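Git already ships a version of this idea as `git rerere` (“reuse recorded resolution”). The sketch below is only a toy model of the concept, not rerere’s actual algorithm or storage format: it keys each conflict by a hash of its two sides (sorted, so a merge and a rebase that see the sides swapped hit the same entry) and calls a hypothetical `ask_agent` callback only on a cache miss. `Conflict` and `ResolutionCache` are illustrative names.

```python
import hashlib
from dataclasses import dataclass
from typing import Callable, Dict, Optional


@dataclass(frozen=True)
class Conflict:
    """One conflicted hunk: the two competing versions of the same region."""
    ours: str
    theirs: str


def conflict_id(c: Conflict) -> str:
    """Stable key for a conflict, independent of which side is 'ours'."""
    # Sorting the sides means the same clash seen during a merge and during a
    # rebase (where ours/theirs are swapped) maps to the same key.
    first, second = sorted([c.ours, c.theirs])
    digest = hashlib.sha256()
    # Length-prefix each side so different splits cannot produce the same bytes.
    for side in (first, second):
        data = side.encode()
        digest.update(len(data).to_bytes(8, "big"))
        digest.update(data)
    return digest.hexdigest()


class ResolutionCache:
    """Hypothetical in-memory store of previously chosen conflict resolutions."""

    def __init__(self) -> None:
        self._store: Dict[str, str] = {}

    def record(self, c: Conflict, resolution: str) -> None:
        self._store[conflict_id(c)] = resolution

    def lookup(self, c: Conflict) -> Optional[str]:
        return self._store.get(conflict_id(c))

    def resolve(self, c: Conflict, ask_agent: Callable[[Conflict], str]) -> str:
        """Reuse a recorded resolution if one exists; otherwise ask the agent once."""
        cached = self.lookup(c)
        if cached is not None:
            return cached
        resolution = ask_agent(c)
        self.record(c, resolution)
        return resolution
```

In a real workflow, the recorded resolution would ideally be one a human has reviewed, so that replaying it is safe.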

Other topics, such as better UX, better education around that UX, and “how git is used in Puppet’s r10k”, were also discussed in the BoF, but I was not part of those conversations, so I cannot provide a recap.

Tests Are King

At FOSDEM, I heard a story about the CTO of a public tech company bragging that they had “vibe coded” a Redis replacement. They told the agent to iterate against the official test suite to ensure compatibility. The result reportedly performs 60x better.

I have repeatedly mentioned on this blog how important it is to ground Coding Agents with builds and tests. By giving the agent a way to validate its result programmatically, you give it clear acceptance criteria to iterate against with Chain of Thought.
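The grounding loop can be sketched as follows. This is a minimal model, not any particular agent’s API: `propose_patch` is a hypothetical stand-in for the agent, `run_checks` for the build and test suite, and the round limit is an arbitrary assumption.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class RunResult:
    """Outcome of building and testing one candidate patch."""
    passed: bool
    failures: List[str] = field(default_factory=list)


def iterate_until_green(
    propose_patch: Callable[[List[str]], str],
    run_checks: Callable[[str], RunResult],
    max_rounds: int = 5,
) -> Optional[str]:
    """Let an agent iterate against build/test results until they pass.

    `propose_patch` stands in for the agent: it receives the failures from the
    previous round (empty on the first) and returns a new candidate patch.
    `run_checks` stands in for the build and test suite, the acceptance criteria.
    """
    feedback: List[str] = []
    for _ in range(max_rounds):
        patch = propose_patch(feedback)
        result = run_checks(patch)
        if result.passed:
            return patch             # acceptance criteria met
        feedback = result.failures   # ground the next attempt in concrete errors
    return None                      # give up and hand back to a human
```

The key design choice is that the stop condition lives outside the agent: it is whatever the tests say, which is exactly why the tests themselves deserve scrutiny.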

As I discussed this with advanced Coding Agent users at FOSDEM, I realized that I had previously been partially wrong about post-agentic code review workflows.

Human review for automated tests might still be very desirable in the upcoming years.

Test code is now much more important than the implementation. Implementation is cheap because it can be generated by clankers. Test code is also getting cheaper, but it should remain more expensive than implementation, because it is subject to careful human review that helps steer the implementation. Tests are human expectations, encoded to ground the agents.

These conversations with folks at FOSDEM made me recall a few things.

When Gemini-CLI first came out, I took it for a spin on a medium-sized problem. After a few minutes, it got stuck and started having self-deprecating thoughts, only to conclude “I should revert everything” and proceed to delete all the progress it had made.

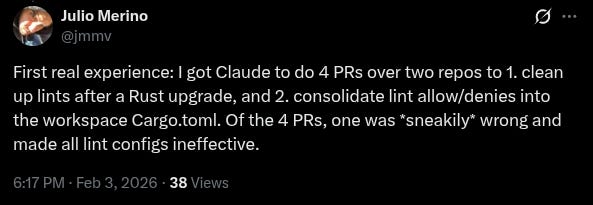

What if the rogue agents started to delete tests to bypass CI checks? Claude Code did that to me in the past.

What if it flipped the tests from expect.True to expect.False? Or just added test.Skip liberally to get the code merged?

Test reviews are still very much needed until model quality gets really, really good. And even then, there should still be a set of “critical” end-to-end behavioral tests that require human review.

SQLite is famous for being “open source, closed test”: the source code is public domain, but TH3, its most rigorous test harness, is proprietary. This could become a new business model in the age of Agentic Coding, replacing the existing “open-core” model. Without a strong test suite, your competitors cannot simply copy your code and point a farm of 1000 agents at it to build a competitive product. Well, at least not as quickly or comprehensively as you can with your closed test suite.

Imagine crafting a test for running SQLite on critical infrastructure, such as an airplane or a satellite in space.

“Open Source Closed Test” still offers what people love about the OSS ecosystem (viewing the code, editing it, building it yourself, and self-hosting the product) while strongly deterring agent-equipped commercial competitors from splitting your revenue and competing on margin.

Speed over IP

This entire situation reminds me of the Chinese tech scene circa 2017-2018, before the Jack Ma crackdown. Intellectual property rights were barely enforced. Internet companies such as Alibaba (my previous employer), Baidu, Tencent, etc. constantly copied each other’s features.

Alipay has mini-apps? WeChat Pay also has mini-apps.

Taobao shipped livestream sales? Douyin copied it and did it even better.

It was not possible to sit on a technology and milk it for years. Chinese companies competed by shipping fast to get the first-mover advantage. Slower ones shaved away the margins by offering higher-quality service at a better price. Ultimately, Chinese consumers won.

We are seeing similar patterns among the AI labs today: little concern for IP protection. Claude Code shipped something? Codex, Gemini-CLI, Open Code, … all get it just a few days later. The models also seem to be converging on the same set of capabilities and have started competing on quality and price.

A New Paradigm

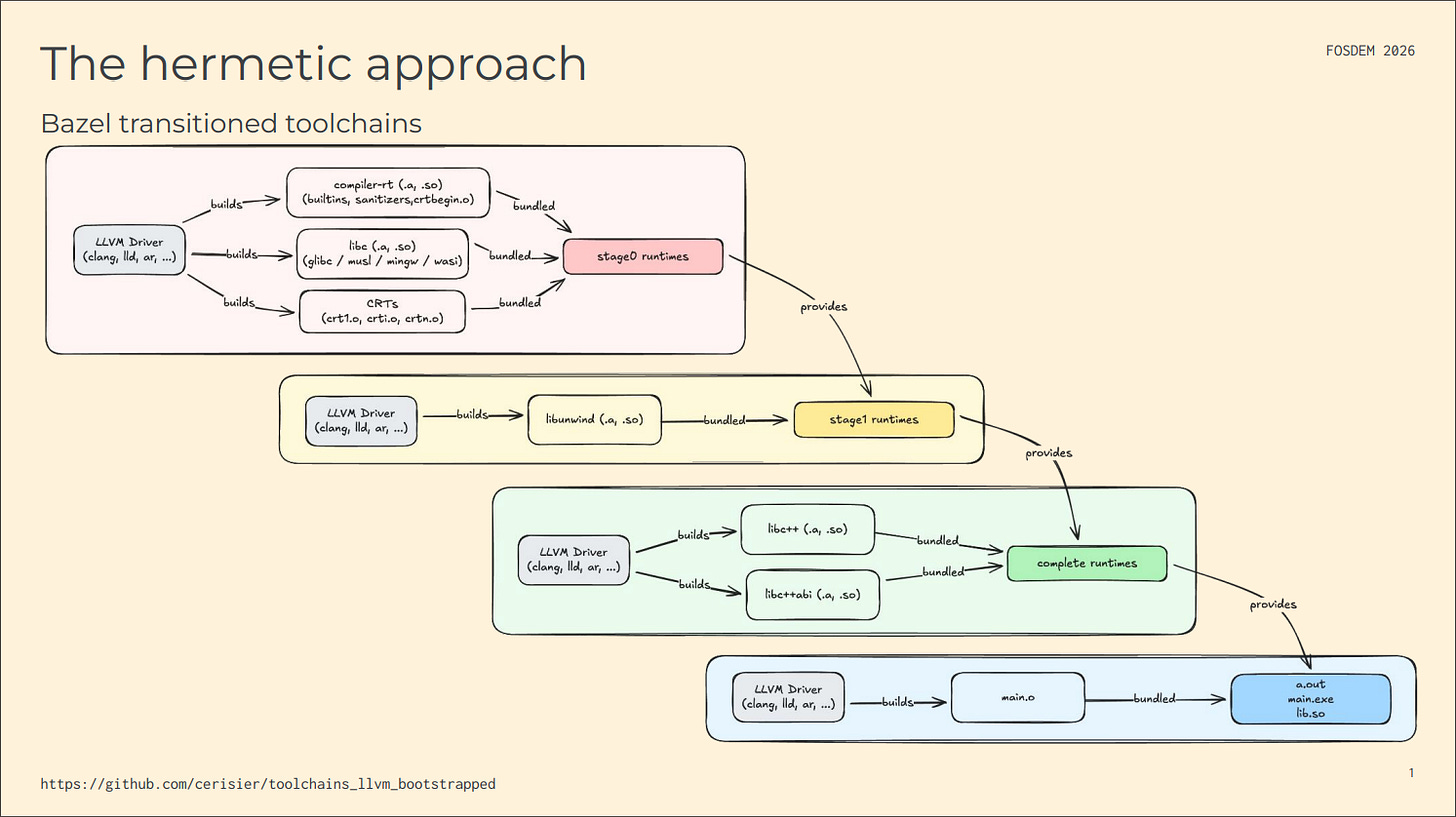

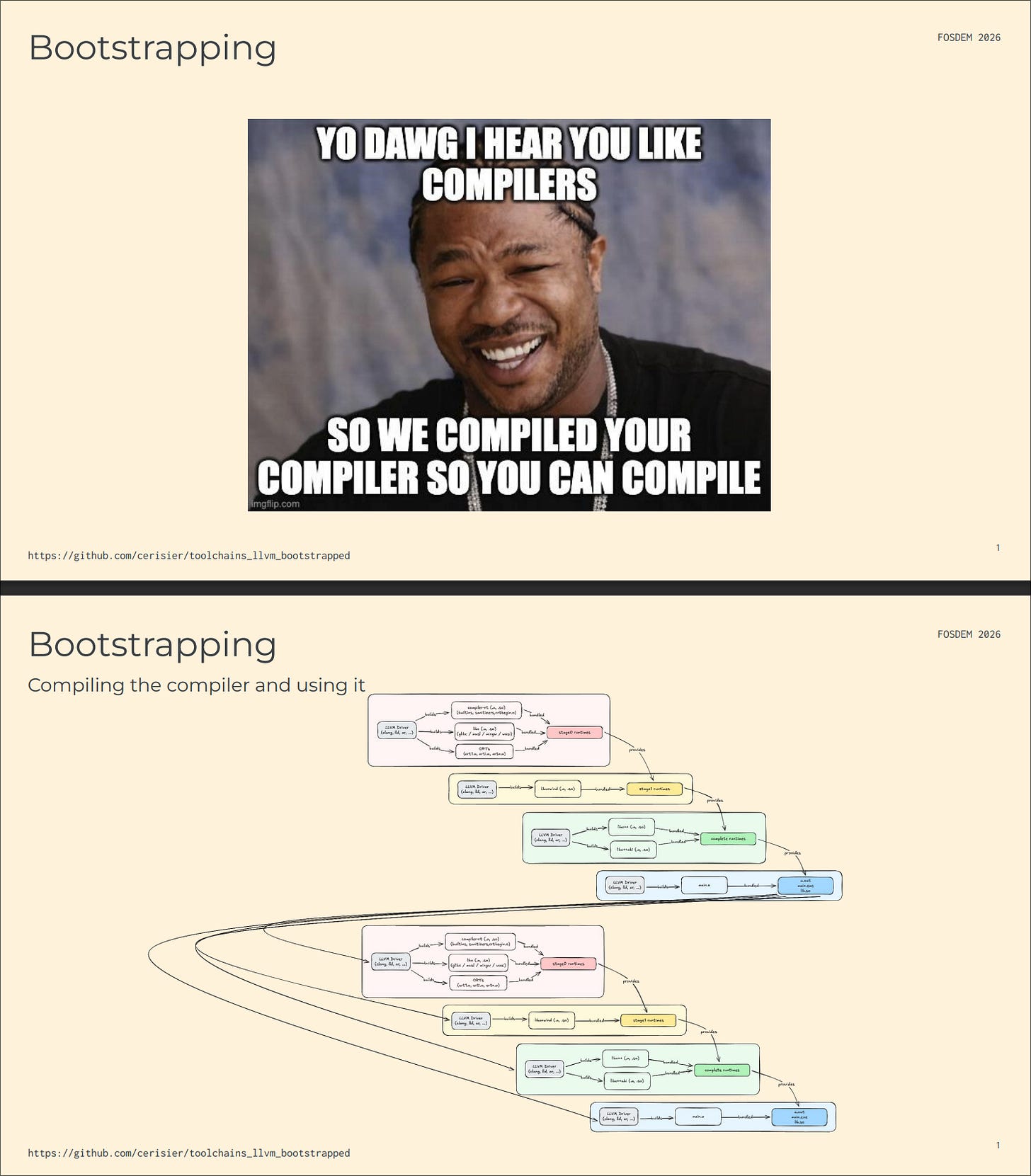

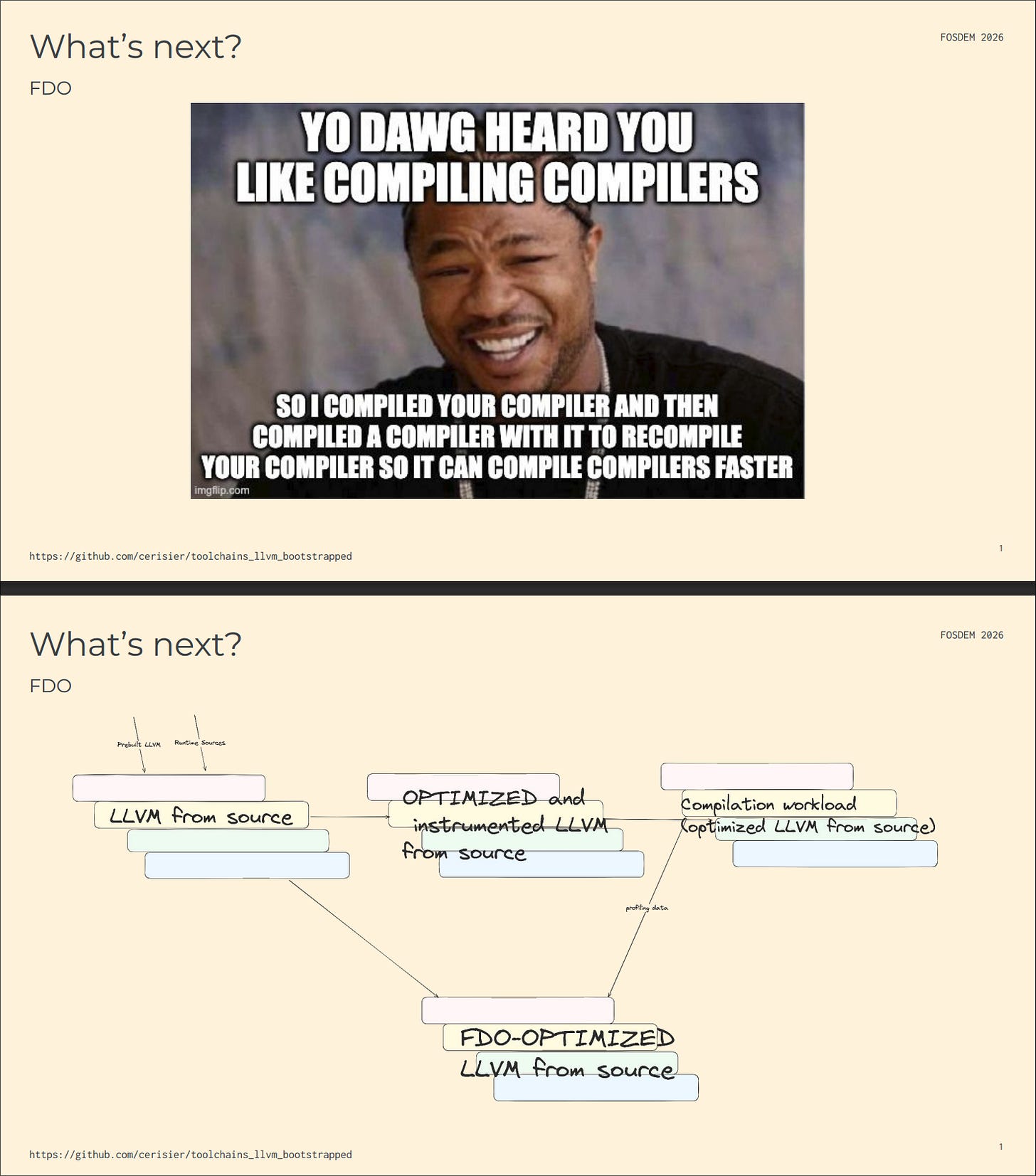

Speaking of speed, the most impressive talk at FOSDEM for me was “Zero-sysroot hermetic LLVM cross-compilation using Bazel” by David Zbarsky and Corentin Kerisit. In it, they showcased an intricate four-stage Bazel build graph that bootstrapped the LLVM toolchain for all the popular platforms: they built the toolchain once, used the result to build the different runtimes, then built the toolchain again with said runtimes.

But they did not stop there; the talk kept getting crazier

and crazier.

But what does this have to do with speed?

Bootstrapping LLVM used to take hours. David and Corentin are doing it in seconds thanks to Bazel and Remote Build Execution, which offloads the computation to a cloud of machines with hundreds of cores.
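A toy model helps show why hundreds of remote cores matter so much: with enough workers, a build’s wall-clock time is bounded by its longest chain of dependent actions (the critical path) rather than by the sum of all actions. The action names and durations below are made up, and the model ignores finite workers, upload time, caching, and scheduling overhead; it is not how Bazel or the Remote Execution API computes anything.

```python
from typing import Dict, List


def critical_path_seconds(durations: Dict[str, float], deps: Dict[str, List[str]]) -> float:
    """Wall-clock time of a build graph with unlimited workers: its longest dependency chain."""
    finish: Dict[str, float] = {}

    def finish_time(action: str) -> float:
        # An action can start only after all of its inputs have been built.
        if action not in finish:
            start = max((finish_time(d) for d in deps.get(action, [])), default=0.0)
            finish[action] = start + durations[action]
        return finish[action]

    return max((finish_time(a) for a in durations), default=0.0)


# Hypothetical graph: 1000 independent 2-second compile actions feeding one 10-second link.
durations = {f"cc_{i}": 2.0 for i in range(1000)}
durations["link"] = 10.0
deps = {"link": [f"cc_{i}" for i in range(1000)]}

print(sum(durations.values()))                  # one core: 2010.0
print(critical_path_seconds(durations, deps))   # unlimited cores: 12.0
```

Real speedups are smaller than this idealized ratio, but compiler toolchains are dominated by many independent compile actions, which is exactly the shape that benefits.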

Seriously, watch the demos in their VODs!!

The project is incredibly polished. Usually, it would take several months, if not years, to get a project of this quality into the Bazel ecosystem. In case you didn’t know, Bazel is hard, like hard-hard, even for senior staff-level engineers. These two guys did it within weeks. Over lunch, both confessed to me that they make heavy use of coding agents for various tasks, which makes a lot of sense because, after all, both of them work for companies in the AI industry.

I expect this project to be the first of many. Being able to build LLVM fast is a huge game-changer that lets many industries move faster. Did you know that VLC was able to ship its Windows ARM64 build earlier than everyone else, thanks to LLVM-MinGW? Did you know that AMD, Intel, and Google are spending a good chunk of their time improving MLIR, a project under LLVM, to improve their inference software stacks? ZML and Modular are among the many AI startups that depend critically on LLVM. Oh, and let’s not forget that Google’s TensorFlow, TPU, and XLA are all built with Bazel (or Blaze, its internal name inside Google).

Bazel is getting easier to use thanks to coding agents. And the coding agents are getting faster thanks to Bazel.

This has been the most fun in-person event I have attended in years. I came out of FOSDEM 26 coughing like a chicken after talking for 3 days straight. I am thankful for the tiny boxes of mints the PostgreSQL booth was giving out for free; they kept my cough manageable until the very last day. Big shoutout to the French startup/hacker community I got to meet on Saturday. Many characters, amazing talents.

11/10 would do it again.